TF-IDF Method

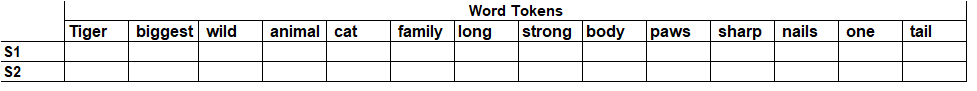

In my last blog we discussed how to create a bag of words using Python [refer to this link: Creating Bag-of-Words using Python]. We saw that the bag-of-words approach depends purely on the frequency of words. Now let's discuss another approach to converting textual data into matrix format. It is called TF-IDF [Term Frequency - Inverse Document Frequency], and it is widely preferred by data scientists and machine learning professionals.

In this approach we consider a term relevant to a document if it appears frequently in that document and is also distinctive to it, i.e. the term should not appear in all the documents. So the term's frequency across the whole collection of documents should be small, while its frequency within the specific document should be high.

The TF-IDF score is calculated from two components: the term frequency of a term (t) in a document (d), and the inverse document frequency of the term. Below are the formulas for calculating the TF and IDF...
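To make the idea concrete, here is a minimal sketch in plain Python. The exact formulas are not reproduced in the text above, so this sketch assumes one common variant: TF is the term's count divided by the number of terms in the document, and IDF is the natural log of the number of documents divided by the number of documents containing the term. The function name `tf_idf`, the whitespace tokenizer and the sample sentences are all illustrative assumptions.

```python
import math
from collections import Counter


def tf_idf(documents):
    """Return one {term: score} dict per document.

    Assumed formulas (a common variant, not necessarily the one used in this post):
      tf(t, d) = (count of t in d) / (total terms in d)
      idf(t)   = ln(N / df(t)), where N is the number of documents and
                 df(t) is the number of documents containing t
    """
    # Naive tokenization: lowercase and split on whitespace
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)

    # Document frequency: in how many documents does each term occur?
    df = Counter()
    for tokens in tokenized:
        df.update(set(tokens))

    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            term: (count / total) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return scores


# Hypothetical sample corpus
docs = [
    "the cat sat on the mat",
    "the dog sat on the log",
    "the cat chased the dog",
]
scores = tf_idf(docs)
for doc, doc_scores in zip(docs, scores):
    print(doc, doc_scores)
```

With this corpus, "the" appears in every document, so its IDF is ln(3/3) = 0 and its score is zero no matter how often it occurs. "mat" appears only in the first document, so it gets the highest score there. Library implementations such as scikit-learn's `TfidfVectorizer` apply IDF smoothing and normalization by default, so their numbers will differ from this sketch.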